How do you assess the reliability of single-item ratings in ESM / EMA?

TL;DR

Single-item measures are everywhere in experience sampling (ESM) / ecological momentary assessment (EMA) because they are fast and low-burden. However, their reliability is usually unknown. A simple within-survey retest provides an intuitive solution: randomly repeat one item and treat any difference as measurement error. Empirical results show that about 27% of within-person variance is noise, roughly 8 points on a 0-100 scale! The good news? You can estimate this directly in your own ESM / EMA study with just a few clicks in m-Path.

If you’ve ever run an experience sampling (ESM) / ecological momentary assessment (EMA) study, you know this trade-off:

You want rich, high-frequency data that capture people’s everyday lives. At the same time, you need to keep participant burden low to maintain compliance.

Single-item measures may be your way out:

✅ They are easy to understand.

✅ They are fast to complete.

✅ They feel close to participants’ lived experience.

As a result, single items have become a default choice for many ESM / EMA researchers.

But there's also a catch. With single-item measures, you cannot assess reliability using standard methods. There is no internal consistency, and classic test-retest does not work when your constructs genuinely change over time.

As a consequence, most ESM / EMA researchers quietly assume that their momentary single items are reliable enough. 🤫

But is that assumption actually justified? That is exactly what the m-Path team examined in a recent study.

The study at a glance

We recruited 91 participants from the general population for a one-week ESM / EMA study. Each participant got 10 notifications per day, and each time they had to rate 6 positive and 6 negative discrete emotions on a 0-100 slider scale.

The key design element we evaluated was simple, but powerful:

Randomly repeat one emotion item within the same momentary survey.

Because the test and retest ratings are given within mere seconds, it is highly implausible that the person’s true emotional state has changed in between. Any difference between the two responses can therefore be attributed to measurement error.

Graphical representation of the test-retest procedure for single-item measures.

What we found

1. Measurement error was substantial.

Across participants and emotion items, repeated ratings differed by about 8 points on the 0-100 scale.

Let that sink in for a moment.

If a participant’s “true” momentary emotion level stays constant, their observed rating can still jump several points up or down purely due to measurement noise.

A concrete example

Imagine a participant answers the item “How happy are you right now?” twice within the same survey.

Nothing meaningful happens in between. No new event. No emotional shift.

Yet the ratings may easily differ by around 10 points.

That difference is not insight. It is noise.

When you rely on hundreds or thousands of such moments for your analyses, this noise quietly shapes your conclusions (for the worse).

When we translated these discrepancies into variance terms, we found that roughly 27% of all within-person variation reflected measurement error variance.
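
To show what such a variance share means, here is a minimal Python sketch. It is not the paper's actual analysis: it assumes you already have an error variance from the retest pairs, and it uses a simple pooled within-person variance (deviations from each person's own mean). The function name `error_share`, the participant labels, and the toy ratings are all hypothetical.

```python
from statistics import mean


def error_share(ratings_by_person, error_variance):
    """Fraction of observed within-person variance attributable to error.

    ratings_by_person: dict mapping a participant id to that person's ratings.
    error_variance: error variance of a single rating (assumed known here).
    """
    squared_devs = []
    for ratings in ratings_by_person.values():
        person_mean = mean(ratings)
        # Deviations from the person's own mean capture within-person variation.
        squared_devs.extend((x - person_mean) ** 2 for x in ratings)
    n_obs = len(squared_devs)
    n_persons = len(ratings_by_person)
    # Pooled within-person variance: one degree of freedom lost per person.
    within_variance = sum(squared_devs) / (n_obs - n_persons)
    return error_variance / within_variance


# Toy data, invented for illustration only.
toy_ratings = {"p1": [50, 70, 60], "p2": [20, 40, 30]}
share = error_share(toy_ratings, error_variance=27)
print(f"Share of within-person variance that is error: {share:.2f}")
```

In this toy example the pooled within-person variance is 100, so an error variance of 27 gives a share of 0.27.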

2. Scale position makes a difference.

Not all ratings were equally noisy.

Emotion ratings closer to the ends of the scale showed less measurement error. There is simply less room to deviate when a response sits near zero or the maximum.

In practice, this means that negative emotion items in community samples were often slightly more reliable than positive ones, because they cluster near the lower bound.

3. Personality traits did not matter that much.

Traits like conscientiousness, social desirability, and neuroticism showed small and inconsistent effects.

Compared to the overall size of measurement error, these differences were modest.

Most of the noise comes from the measurement process itself, not from who your participants are.

Why this matters for your research

Our results do not show that single-item measures are unusable. But they do suggest that they are far from perfectly precise.

That matters, because measurement error has predictable and far-reaching consequences for your analyses. It:

❌ Attenuates correlations towards zero.

❌ Produces biased model parameters.

❌ Reduces statistical power.

❌ Increases the risk of false negative conclusions.
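
To make the attenuation point concrete, here is a minimal sketch based on Spearman's classic attenuation formula. It rests on two assumptions that go beyond the study itself: that an error share of 27% translates into a reliability of roughly 0.73 for both variables, and that the errors of the two variables are uncorrelated. The function name and the "true" correlation of 0.30 are hypothetical.

```python
import math


def attenuated_correlation(true_r, reliability_x, reliability_y):
    """Expected observed correlation under Spearman's attenuation formula."""
    for rel in (reliability_x, reliability_y):
        if not 0 < rel <= 1:
            raise ValueError("reliability must be in (0, 1]")
    # Unreliability in either variable shrinks the observed correlation.
    return true_r * math.sqrt(reliability_x * reliability_y)


# Assumption: reliability = 1 - 0.27 = 0.73 for both single items.
observed = attenuated_correlation(0.30, 0.73, 0.73)
print(f"True r = 0.30, expected observed r = {observed:.3f}")
```

Under these assumptions, a true within-person correlation of 0.30 would be expected to show up as roughly 0.22 in the observed data.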

Simply ignoring measurement error will not make it disappear. Researchers should measure it to get an indication of its influence. The good news is that a simple within-survey retest lets you quantify this uncertainty directly, instead of guessing.

Evaluating the reliability of single-item measures in m-Path

So how do you set this up in our platform? Below, we provide a quick guide:

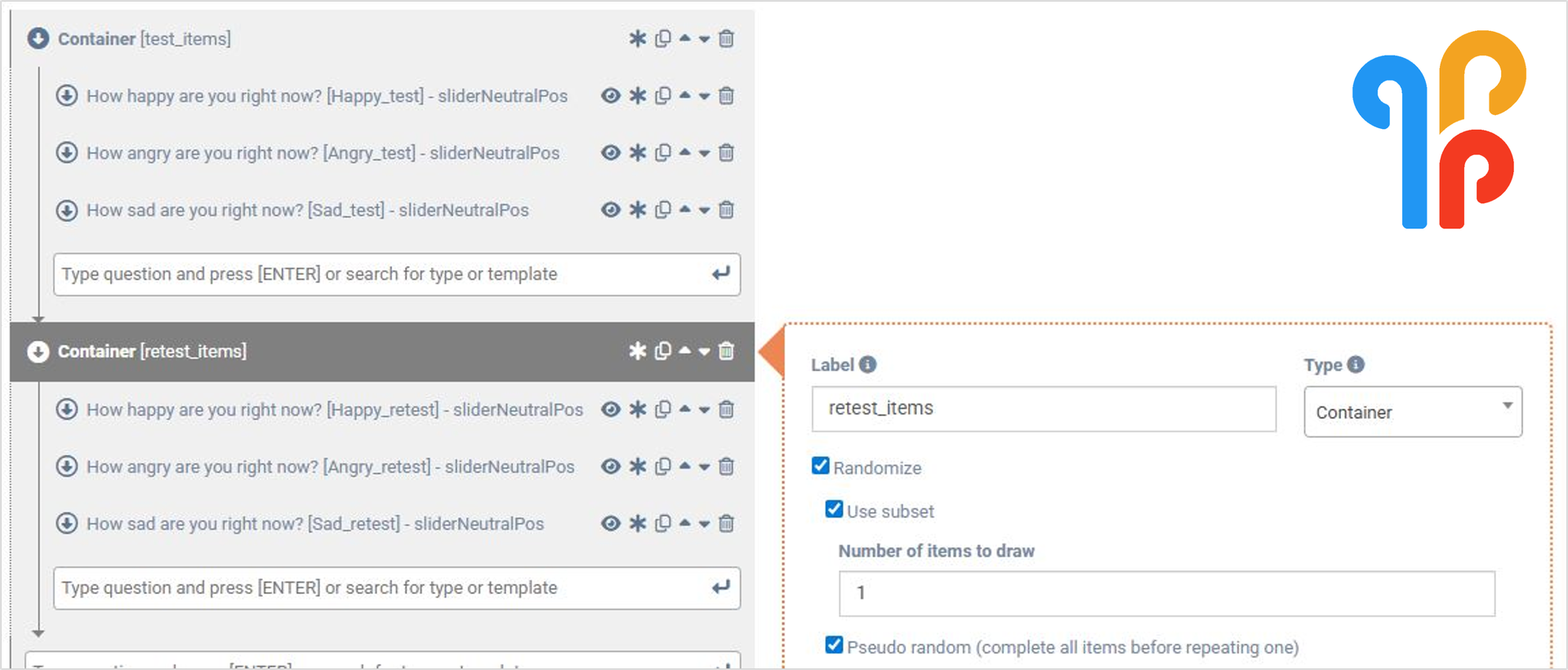

1. Put your single items in a container.

Randomize the presentation order. These will be your test items.

2. Duplicate that container.

The items in this container will serve as your retest pool. Enable a subset of exactly one item and use the pseudo-random selection. This way, different items are retested across momentary assessments, while no single item is repeated too often.

Simple m-Path set-up for the test-retest procedure of single-item measures.

3. Evaluate the average test-retest reliability.

Identify all test-retest item pairs and compute the squared difference for each pair. Average these squared differences across all observations, divide by 2 to obtain the error variance of a single rating, and take the square root to express the measurement error on the original measurement scale.
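
The computation in this step can be sketched in a few lines of Python. This is a minimal illustration, not an official m-Path script: it assumes you have already matched each test rating with its retest rating from your exported data, and the function name `retest_error_sd` and the example pairs are hypothetical.

```python
import math


def retest_error_sd(pairs):
    """Measurement-error SD from (test, retest) rating pairs."""
    if not pairs:
        raise ValueError("need at least one test-retest pair")
    # Squared difference per pair, averaged across all observations.
    mean_sq_diff = sum((test - retest) ** 2 for test, retest in pairs) / len(pairs)
    # A difference contains the error of two ratings, so halve it
    # to get the error variance of a single rating.
    error_variance = mean_sq_diff / 2
    # Square root brings the result back to the original 0-100 scale.
    return math.sqrt(error_variance)


# Invented example pairs on a 0-100 slider: (test, retest).
pairs = [(62, 70), (15, 11), (80, 74), (40, 40), (55, 63)]
error_sd = retest_error_sd(pairs)
print(f"Measurement error: {error_sd:.2f} points")
```

If you want a separate estimate per item or per participant, you could apply the same function to the corresponding subset of pairs, provided each subset contains enough observations.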

4. Profit! 🤑

With one extra item per survey, you can now stop guessing about reliability and start measuring it.

Reliability is a prerequisite, not a luxury

Without an empirical estimate of reliability, you cannot tell whether a weak effect is theoretically unimportant or simply masked by noise.

By estimating measurement error directly, you gain a critical reference point. You can judge whether small effects are meaningful or whether your data simply lack precision at the momentary level.

That changes how you interpret both significant and non-significant findings.

Will you keep assuming your momentary measures are reliable, or will you start measuring reliability in your next ESM / EMA study?

Check out our dedicated manual page on containers to learn about the different settings of this item type.

👉 Explore the full paper here: Psychological Assessment, 2022.